Viral videos travel fast. Sometimes they are real, sometimes they are clipped out of context, and sometimes they are fully synthetic. Either way, the damage happens when people share first and verify later. That is exactly where video provenance helps.

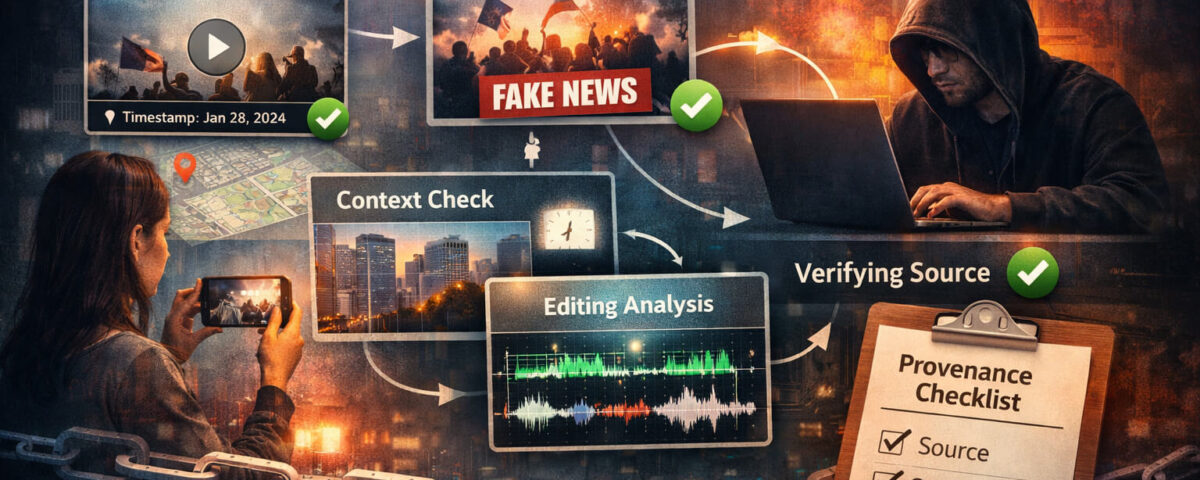

Video provenance is the practical process of confirming where a video came from, how it moved across the internet, and whether the story attached to it matches what actually happened. It is not a single tool and it is not a magic stamp that says “real.” It is a set of habits you can repeat every time you see a clip that could mislead someone.

This guide gives you a clear, repeatable workflow that works for journalists, creators, brand teams, and everyday viewers. You will learn how to track the earliest upload, confirm context, compare versions, and use verification signals like content credentials and C2PA metadata when they exist. You will also learn when to use Detect AI Video as a second opinion, and when you should not trust any single signal.

The Quick Takeaway That Saves You Time

If you only remember one thing, remember this: provenance is a chain, not a label. The fastest way to confirm real footage is to lock the claim, find the earliest upload, check context, and compare versions. When the stakes are high, you add deeper checks and cross-confirmation.

What Video Provenance Means (and What It Does Not)

Video provenance answers three simple questions:

- Who first published the video, or what is the earliest known source?

- What happened to the video along the way (edits, reposts, re-encodes, overlays)?

- Does the caption match the real place, time, and event shown in the footage?

What provenance is not:

- Not the same as “this video looks real”

- Not the same as “this account seems trustworthy”

- Not the same as “a tool said it is AI”

A realistic workflow treats every signal as a clue, then confirms the story with multiple checks.

When Provenance Matters Most

Not every video deserves an investigation. Provenance matters most when a clip can cause harm, trigger panic, damage reputations, or influence decisions. Common high-risk situations include:

- Breaking news and crisis clips

- Celebrity or politician “statements” that could be fabricated

- Videos tied to money, donations, investment claims, or giveaways

- Medical or safety advice “caught on camera”

- Brand or product claims that could mislead customers

- Private groups where scams spread quickly, including WhatsApp scams

If a clip is high-impact and you cannot confirm the basics in a few minutes, treat it as unverified and do not share it as fact.

The Provenance Chain in Plain English

Think of every viral video as a chain with several links:

- Original capture: the video was recorded, generated, or assembled somewhere.

- First publication: the earliest public upload appears on a platform, a website, or a messaging app.

- Reposts and edits: people crop, trim, add subtitles, stitch scenes, or re-upload at different quality levels.

- Narrative attachment: a caption or headline is attached, often changing as it spreads.

A strong provenance chain looks like this: the earliest source is identifiable, the context matches, and the clip’s versions are consistent with normal re-uploads. A weak provenance chain looks like this: unclear origin, dramatic caption, many low-quality copies, and no credible confirmation.

Step 1: Lock the Claim Before You Investigate

Most people waste time because they do not define what they are trying to confirm. A caption like “Look what happened today in the city” is vague. Turn it into one clean claim:

- “This video shows a protest in Berlin on January 28.”

- “This clip shows a celebrity endorsing a crypto giveaway.”

- “This footage shows a runway crash that happened this morning.”

Now you have something you can verify. If you cannot write the claim in one sentence, the post is probably mixing multiple claims at once.

Tip: write down the claim in a note. Your job is to test that claim, not the emotions around it.

Step 2: Find the Earliest Upload (Fast Methods That Work)

The fastest way to break a viral hoax is to find the earliest known upload. Many “new” videos are old videos recycled with a new caption.

Here is a practical approach:

Check the obvious first

- Open the account that posted it. Is it a repost account?

- Look for the earliest timestamp on that profile. Is the account new?

- Check whether the post is a re-upload (watermarks, captions baked into the video, platform logos)

Search for the same video elsewhere

You are looking for older copies, especially longer versions. Common signals that help you spot an earlier upload:

- A longer clip exists with extra seconds before or after the viral cut

- The viral version is cropped to hide a watermark

- The viral version is mirrored or speed-changed to avoid detection

Use “version hunting” instead of relying on reverse search

Video reverse search is not always reliable, so treat it like version hunting:

- Search short phrases from the caption

- Search the name of the event or location mentioned

- Search for distinctive visual elements (“blue stage lights”, “red banner”, “stadium screen”)

If the clip is tied to a news event, a standard news verification workflow becomes the backbone of this step. Once you know what to search for, you can usually find the earliest upload through credible outlets or direct witnesses.
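Once version hunting turns up several copies, picking the earliest one is simple bookkeeping. The Python sketch below assumes you have recorded each copy by hand with its URL, the platform's upload timestamp converted to UTC, and the clip length; `FoundCopy`, `earliest_copy`, and `longer_versions` are hypothetical names for illustration, not part of any tool. It returns the oldest copy and lists copies that run noticeably longer than the viral cut, since those may contain the missing context.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class FoundCopy:
    """One copy of the clip found while version hunting (hypothetical record)."""
    url: str
    uploaded_at: datetime  # platform upload time, converted to UTC
    duration_s: float      # clip length in seconds


def earliest_copy(copies: List[FoundCopy]) -> Optional[FoundCopy]:
    """Return the copy with the oldest upload timestamp, or None if none were found."""
    if not copies:
        return None
    return min(copies, key=lambda c: c.uploaded_at)


def longer_versions(copies: List[FoundCopy], viral: FoundCopy,
                    slack_s: float = 1.0) -> List[FoundCopy]:
    """Copies longer than the viral cut (beyond a small slack) may hold the missing context."""
    return [c for c in copies if c.duration_s > viral.duration_s + slack_s]


# Example with made-up URLs and dates.
viral = FoundCopy("https://example.com/viral", datetime(2024, 5, 2, tzinfo=timezone.utc), 14.0)
older = FoundCopy("https://example.com/older", datetime(2023, 11, 9, tzinfo=timezone.utc), 41.5)
print(earliest_copy([viral, older]).url)            # https://example.com/older
print(len(longer_versions([viral, older], viral)))  # 1
```

Platform timestamps can be wrong or can reflect a re-upload, so the oldest copy you found is the earliest known source, not proof of the original capture.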

Step 3: Verify Context (Most Fakes Fail Here)

Even when the video itself is real, the caption might be false. Context verification is often more important than “AI detection.”

Check these context anchors:

Time

- Daylight vs night, shadows, and lighting direction

- Seasonal cues (clothing, trees, weather)

- Any screens showing date or time, but treat screens as editable

Place

- Street signs, languages, license plate styles, public transport logos

- Landscape, architecture, storefront brands

- Flags, uniforms, and local emergency markings

Event

- What exactly does the claim say about the event?

- Does the crowd behavior match the narrative?

- Are there multiple angles posted by other users?

If the caption says “today” but you find older copies from months ago, the claim is false even if the footage is real.
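The “today” test is a single date comparison. As a minimal sketch, assuming both dates are expressed in the same time zone (upload timestamps near midnight can otherwise shift by a day), the hypothetical helper below flags a caption whose claimed date falls after the oldest copy you found.

```python
from datetime import date


def caption_date_contradicted(claimed_event_date: date, earliest_copy_date: date) -> bool:
    """True when the oldest known copy was posted before the event the caption describes.

    Footage cannot be uploaded before the event it shows, so an older copy proves
    the caption's date wrong, even when the footage itself is authentic.
    """
    return earliest_copy_date < claimed_event_date


# Caption says "today" (a hypothetical 2025-01-28), but a copy from August 2024 exists.
print(caption_date_contradicted(date(2025, 1, 28), date(2024, 8, 15)))  # True
```

A `False` result only means this check did not catch a problem; it does not confirm the caption.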

Step 4: Compare Versions to Spot Edits

When you have two or more copies, you can often prove manipulation without any advanced tools. Compare them side by side:

- Does one version cut away right before a key moment?

- Are the subtitles different?

- Is the audio different?

- Is the face or voice clearer in one version?
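Part of the side-by-side comparison can be automated when you suspect a copy was mirrored. The sketch below uses a difference hash (dHash), a common perceptual-hashing technique chosen here purely as an illustration; the workflow above does not prescribe it. It assumes you have already extracted matching frames from each copy and downscaled them to 8 rows by 9 columns of grayscale values with another tool. Because a speed change shifts timestamps, pick frames by content (the same moment in the action), not by time code. The 10-bit distance threshold is an arbitrary starting point.

```python
from typing import List

Frame = List[List[int]]  # grayscale rows, assumed already downscaled to 8 rows x 9 columns


def dhash(frame: Frame) -> int:
    """Difference hash: one bit per horizontal pixel pair, set when the left pixel is brighter."""
    bits = 0
    for row in frame:
        for left, right in zip(row, row[1:]):
            bits = (bits << 1) | (1 if left > right else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def mirror(frame: Frame) -> Frame:
    """Flip a frame left to right."""
    return [list(reversed(row)) for row in frame]


def compare_frames(a: Frame, b: Frame, max_distance: int = 10) -> str:
    """Classify two frames as 'same', 'mirrored', or 'different'."""
    if hamming(dhash(a), dhash(b)) <= max_distance:
        return "same"
    if hamming(dhash(a), dhash(mirror(b))) <= max_distance:
        return "mirrored"
    return "different"


# Synthetic example: a left-to-right brightness gradient and its mirror image.
gradient = [[x * 10 for x in range(9)] for _ in range(8)]
print(compare_frames(gradient, gradient))          # same
print(compare_frames(gradient, mirror(gradient)))  # mirrored
```

A “same” or “mirrored” result only tells you two copies share footage; deciding whether an edit changes the story still takes a human viewer.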

Edits do not always mean deception; sometimes creators simply trim for length. But when an edit changes the story, it becomes a trust problem. For a deeper dive into visual editing clues, a guide to spotting fake video is a natural next step.

Step 5: Separate AI Artifacts from Normal Compression

This is where many reviewers make mistakes. Low-quality video can look “AI” simply because it is heavily compressed. You want to separate three things:

- Platform compression (blocky noise, smearing, low bitrate)

- Editing artifacts (bad masking, sloppy cutouts, mismatched color grades)

- Synthetic artifacts (odd motion, inconsistent detail, unnatural transitions)

AI-generated clips often show issues in motion continuity, physics, and fine detail consistency from frame to frame. However, modern generators can look very clean, and real videos can look messy. That is why this step is only one signal, not the final answer.
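One rough way to judge whether blockiness and smearing are simply compression is to estimate how many bits the encoder spent per pixel: bits per pixel = bitrate / (width × height × frames per second), using values you can read from a tool such as ffprobe. The sketch below does that arithmetic. The 0.05 threshold is a hypothetical rule of thumb, not an established cutoff; tune it against clips whose origin you already know.

```python
def bits_per_pixel(bitrate_bps: float, width: int, height: int, fps: float) -> float:
    """Average number of bits the encoder spent on each pixel of each frame."""
    return bitrate_bps / (width * height * fps)


def likely_compression_limited(bpp: float, threshold: float = 0.05) -> bool:
    """Hypothetical rule of thumb: below the threshold, blockiness and smearing are
    expected from compression alone, so they say little about whether a clip is synthetic."""
    return bpp < threshold


# A 1 Mbps, 1280x720, 30 fps upload.
bpp = bits_per_pixel(1_000_000, 1280, 720, 30)
print(round(bpp, 3))                    # 0.036
print(likely_compression_limited(bpp))  # True
```

A low value does not clear a clip; it only tells you that visual messiness is weak evidence either way.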

For a deeper breakdown of synthetic cues, see guides on deepfake detection and AI generated video.

Step 6: Check for Provenance Tech Signals When Available

Sometimes the best proof is not “how it looks,” but “how it was made and published.”

Content Credentials

Content Credentials are a way to attach provenance information to media, such as who created it and how it was edited. If the credentials are present and trusted, they can support a clear origin story. If they are missing, that does not automatically mean the media is fake; many platforms and workflows do not include them.

C2PA Metadata

C2PA metadata follows an open standard for content provenance, published by the Coalition for Content Provenance and Authenticity, that can include a cryptographically signed history of edits and the tools used. Think of it like a tamper-evident label for media. If a clip has valid C2PA data, it can provide strong evidence about origin and edits. If the chain is broken, or if the metadata is stripped, you lose that signal.

Important limitation: metadata can be removed by re-uploads, screen recordings, and platform processing. So the absence of credentials is common, especially for viral clips.
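The three outcomes described above (valid, broken, or missing credentials) lead to very different conclusions. The sketch below encodes that mapping so a missing manifest can never be read as evidence of fakery. How you obtain the status is an assumption: a validator such as the open-source c2patool from the Content Authenticity Initiative can report whether a manifest is present and valid, but `CredentialStatus` and `credential_signal` are hypothetical names, not its API.

```python
from enum import Enum


class CredentialStatus(Enum):
    VALID = "valid"      # manifest present and its signature checks pass
    BROKEN = "broken"    # manifest present, but validation fails (chain broken)
    MISSING = "missing"  # no manifest, e.g. stripped by a re-upload or screen recording


def credential_signal(status: CredentialStatus) -> str:
    """Translate a credential check into how much weight it deserves."""
    if status is CredentialStatus.VALID:
        return "strong evidence about origin and edits; still check that the caption matches"
    if status is CredentialStatus.BROKEN:
        return "chain broken: the signal is lost, so rely on source tracing and context"
    return "neutral: missing credentials are common and prove nothing on their own"


print(credential_signal(CredentialStatus.MISSING))
```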

Step 7: Use Detect AI Video as a Practical Second Opinion

When a clip is suspicious, run it through Detect AI Video after you complete the basic provenance steps. This order matters. Provenance checks often solve the case quickly, while AI checks help you decide whether deeper investigation is worth your time.

Use Detect AI Video to:

- Flag potential synthetic patterns you might miss at first glance

- Add confidence when other signals are unclear

- Prioritize which clips need deeper verification

Do not use it as the only proof. A smart workflow uses tool output as a signal, then confirms with context, source tracing, and version comparison.

A 5-Minute Provenance Checklist You Can Reuse

If you are short on time, follow this checklist:

- Define the claim in one sentence

- Identify the earliest known upload or oldest matching copy

- Confirm time and place using visible cues

- Compare at least two versions if possible

- Check whether the caption matches the actual footage

- If still uncertain, use Detect AI Video as a second opinion

- If you cannot confirm, label it unverified and do not spread it as fact
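The checklist can also be written as a small decision function, which makes the default explicit: anything not positively confirmed ends up labeled unverified. In this sketch the field names and the two labels are hypothetical. `versions_consistent` is `None` when only one copy was found, and `detector_flagged` is `None` when no detector was run, so a missing tool result neither helps nor hurts. A detector flag only downgrades the verdict, matching the advice to treat tool output as a signal rather than proof.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ChecklistResult:
    claim: str                        # the claim written as one sentence
    earliest_copy_found: bool
    time_and_place_confirmed: bool
    caption_matches_footage: bool
    versions_consistent: Optional[bool] = None  # None: only one copy was found
    detector_flagged: Optional[bool] = None     # None: no detector was run


def verdict(r: ChecklistResult) -> str:
    """Return 'confirmed' only when every core check passed; otherwise 'unverified'."""
    core_checks = (
        bool(r.claim.strip())
        and r.earliest_copy_found
        and r.time_and_place_confirmed
        and r.caption_matches_footage
    )
    if not core_checks:
        return "unverified"
    if r.versions_consistent is False:
        return "unverified"  # copies disagree in a way that changes the story
    if r.detector_flagged:
        return "unverified"  # a flag calls for deeper checks, not a conclusion
    return "confirmed"


result = ChecklistResult("This video shows a protest in Berlin on January 28.",
                         earliest_copy_found=True, time_and_place_confirmed=False,
                         caption_matches_footage=True)
print(verdict(result))  # unverified
```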

The same checklist is also a strong first defense against financial traps, especially when a clip is part of a scam video campaign.

High-Risk Patterns You Should Treat as Suspicious

Some patterns show up again and again in manipulative media:

- “Breaking” clips with no source, only repost chains

- Celebrity clips that push urgency: “last chance,” “limited,” “DM me”

- Emotional manipulation: fear, anger, or shock captions that demand sharing

- Videos tied to money, giveaways, crypto, or “support the cause” links

- Private-message distribution where verification is difficult, including WhatsApp scams

- Impersonation tactics where an AI-generated face or voice poses as a real person

When you see these patterns, slow down. The fastest way to avoid being manipulated is to refuse to act under pressure.

What to Do If You Cannot Confirm Provenance

Sometimes you will not be able to prove a video is real or fake quickly. That is normal.

Here is the safest approach:

- Do not share it as fact

- If you must share for awareness, label it clearly as unverified

- Share what you checked and what you could not confirm

- Save the link to the earliest version you found

- Encourage others to verify through credible sources

This is the difference between responsible sharing and accidental amplification.

Quick Wrap-Up

Video provenance is not about becoming a forensic expert. It is about building a simple habit: define the claim, find the earliest upload, verify context, compare versions, and only then lean on tools like Detect AI Video for extra signal. When you follow that loop, most viral hoaxes collapse quickly, and real footage becomes easier to trust with confidence rather than emotion.

FAQ

What is video provenance in simple terms?

Video provenance is the history of a video: where it came from, how it was edited or re-uploaded, and whether the story attached to it matches reality.

Can a real video still be misleading?

Yes. A real clip can be old, cropped, or mislabeled. Provenance is often about verifying context, not just detecting manipulation.

Does missing metadata mean a video is fake?

No. Metadata is frequently stripped by platforms and re-uploads. Missing C2PA metadata or credentials is common and does not prove anything by itself.

What is the fastest way to verify a viral clip?

Find the earliest upload, confirm the claim, and check context cues like place, time, and event. Most hoaxes fail these checks.

How reliable are AI detection tools?

They are useful signals, not final proof. Use Detect AI Video as a second opinion after basic provenance checks, then confirm with source and context evidence.

What should I do if I cannot confirm the video?

Do not share it as fact. If you share it at all, label it unverified, explain what you checked, and point people to credible sources for confirmation.