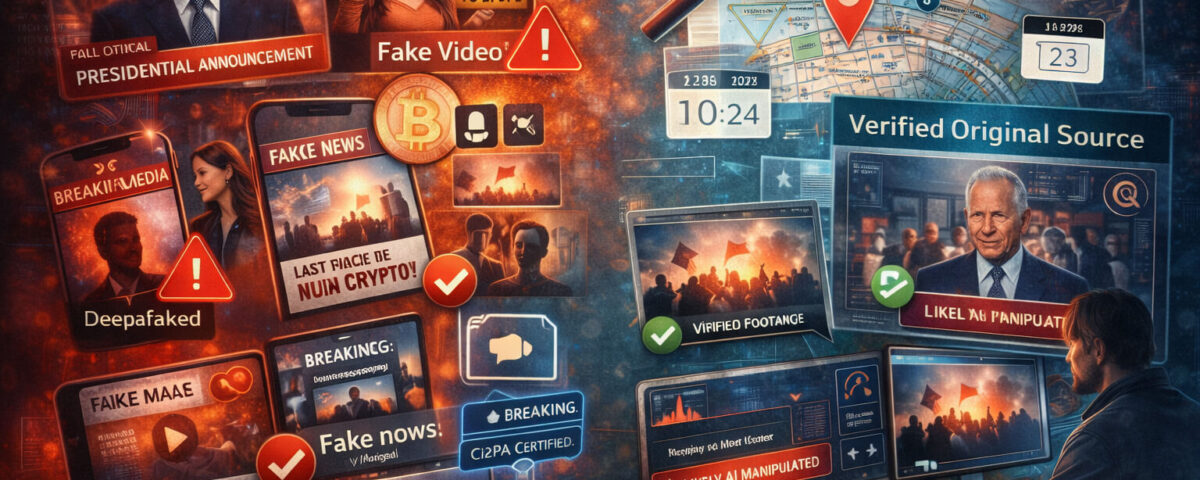

Synthetic media is no longer a niche topic for researchers or filmmakers. It is already part of everyday content. Some synthetic media is harmless or even helpful, like AI-assisted editing for accessibility. Other synthetic media is designed to mislead, scam, or manipulate. The problem is not that AI exists. The problem is that video now looks believable by default, even when it is fake.

This guide gives you a practical way to stay safe without becoming paranoid. You will learn what synthetic media includes, why it is spreading, what risks matter most, and how to verify clips with a clear, repeatable workflow. When you need an extra signal, you can also run a clip through Detect AI Video to flag likely manipulation faster.

What Synthetic Media Means (And What It Includes)

Synthetic media is any media that is generated, altered, or reconstructed using AI or automated techniques in a way that changes what the audience believes they are seeing or hearing. It includes:

AI-generated video. Entire scenes or people are generated from prompts or reference images.

AI-edited video. Real footage is modified with AI, like swapping faces, changing backgrounds, or removing objects.

Deepfakes. A common subset where identity is manipulated, typically face swaps or face reenactments.

Voice cloning. Audio is generated to sound like a real person.

Lip-sync and dubbing tools. The mouth movements are altered to match new audio, sometimes in another language.

Synthetic narration and “talking head” content. A generated speaker delivers scripted content with realistic facial motion.

Hybrid clips. A real video with synthetic audio, or synthetic visuals layered on real footage.

Synthetic media is not always malicious, but it is easy to weaponize because it can create “evidence” quickly.

Why Synthetic Media Is Spreading So Fast

Three forces are pushing synthetic media into every corner of the internet.

First, the tools are cheap, fast, and easy. You do not need a studio anymore.

Second, platforms reward engagement. Viral clips travel farther than careful explanations.

Third, the line between editing and fabrication has blurred. Many creators use AI to polish videos, so viewers get used to the “AI look” and stop noticing warning signs.

That combination makes verification a basic digital skill, like checking a sender’s email address before you click a link.

The New Risks You Actually Need to Care About

Not every risk is equally likely, and not every risk deserves your attention. These are the ones that matter most for real people and real businesses.

Misinformation and False Context

A clip can be real and still be misleading. For example, a real video reused with a different caption can trigger outrage or panic. Verification is often about context, not pixels.

This is exactly why news verification matters. The first question is often not “Is this edited?” but “Is this clip being used to claim something it does not prove?”

Scam Ads and Influencer Fraud

Synthetic media is now a scammer’s dream. Fake endorsements, fake testimonials, and fake “proof” videos can push people into buying useless products or sharing private information.

If your site covers or fights fraud, building content around scam videos is not just relevant; it is necessary.

Impersonation and Reputation Damage

A fake clip of a person saying something offensive can spread in minutes. Even after it is disproven, the damage may remain. Public figures get hit often, but normal people are targets too.

Financial Fraud and Account Takeovers

Some attacks use video as the hook. You see a clip, you trust it, and then you click a link or send money. This is especially common in private-message channels such as WhatsApp, where trust is higher and verification is less common.

Legal and Compliance Risks

If you run a business, synthetic media can create brand risk. Sharing or embedding an unverified clip can trigger legal issues, takedowns, or partner complaints. A simple verification workflow reduces that risk.

Synthetic Media vs Deepfakes: What’s Different

Many people use “deepfake” as a synonym for all synthetic content, but synthetic media is broader.

Deepfakes usually focus on identity. They alter who appears to be speaking or acting.

Synthetic media includes identity manipulation, but also fully generated scenes, AI-enhanced “reality,” synthetic audio, and fabricated context.

In practice, your verification workflow should not depend on terminology. It should depend on what the clip is trying to make you believe.

The Most Common Synthetic Media Patterns You’ll See

You do not need to memorize technical details. You need a short list of patterns that show up often.

Motion That Feels Smooth in the Wrong Way

AI video can look too stable. Micro-jitter, camera shake, and natural imperfection are sometimes missing. Movement can also drift in strange ways, especially in hair, jewelry, or fabric.

Weird Hands, Accessories, and Edges

Even modern generators still struggle with hands and complex interactions. Watch rings, glasses frames, and fingers near the face. Edges may shimmer or “crawl” when the subject moves.

Reflections and Shadows That Don’t Agree

If the lighting is strong, shadows should be consistent. Mirrors, windows, and glossy surfaces often reveal problems. A reflection might lag, blur, or not match the subject.

Audio That Sounds Right but Feels Off

Voice deepfakes often get pronunciation right but miss natural rhythm. Listen for unnatural pauses, perfectly even volume, or emotional mismatch.

Overly Perfect Skin and Texture

Some synthetic faces look too clean, with smoothing that resembles beauty filters but goes further. Real cameras capture tiny texture and uneven detail.

These signs alone do not prove a clip is fake, but they tell you when to slow down and verify.

A Fast Verification Framework (Under 5 Minutes)

Here is a workflow that works for most suspicious videos. You can use it as a checklist.

Step 1: Pause and Define the Claim

What is the clip trying to prove? Write it in one sentence.

If you cannot define the claim, you cannot verify it.

Step 2: Capture the Evidence You Have

Save the URL, timestamp, and a screenshot of the post and caption. If it disappears later, you still have context.
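If you capture evidence often, a small log keeps it consistent. The sketch below is a hypothetical helper, not part of any tool mentioned in this guide: the `EvidenceRecord` fields and the JSON-lines log format are assumptions. It also stores a SHA-256 hash of each saved screenshot, so you can later show that your copy has not changed since you captured it.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from datetime import datetime, timezone
from pathlib import Path


@dataclass
class EvidenceRecord:
    """One captured post. Field names are illustrative, not a standard schema."""
    url: str
    caption: str
    captured_at: str          # when *you* saved it (UTC, ISO 8601)
    screenshot_path: str
    screenshot_sha256: str    # fingerprint of the saved screenshot file


def sha256_of_file(path: Path) -> str:
    """Hash a file in chunks so large files (e.g. a saved video) need not fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def capture(url: str, caption: str, screenshot: Path) -> EvidenceRecord:
    """Build a record for a post you have already screenshotted."""
    return EvidenceRecord(
        url=url,
        caption=caption,
        captured_at=datetime.now(timezone.utc).isoformat(),
        screenshot_path=str(screenshot),
        screenshot_sha256=sha256_of_file(screenshot),
    )


def append_to_log(record: EvidenceRecord, log_path: Path) -> None:
    """Append one JSON line per record; appending never overwrites earlier entries."""
    with log_path.open("a", encoding="utf-8") as f:
        f.write(json.dumps(asdict(record)) + "\n")
```

An append-only log is a deliberate choice: if a post is later deleted or edited, your original capture and its hash are still on file.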

Step 3: Find the Earliest Source

Search for the first upload, not the most popular repost.

Check the account posting it. Is it a known source or a random page?

Look for earlier versions on other platforms.

Search key frames. A simple reverse-image search using a screenshot can uncover older posts.

Search the exact quote or headline in the caption.

If you find an earlier upload with a different caption, that is often your answer.
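The "earliest upload with a different caption" check can be written down as a tiny routine. This is a minimal sketch assuming you have recorded each copy you found as a hypothetical `Sighting`, with all timestamps timezone-aware (mixing naive and aware datetimes would raise an error). Platform upload dates can be approximate, so treat the result as a lead, not a conclusion.

```python
from dataclasses import dataclass
from datetime import datetime
from typing import Optional


@dataclass
class Sighting:
    """A copy of the clip found while searching (illustrative fields)."""
    platform: str
    account: str
    uploaded_at: datetime   # as shown by the platform; assumed timezone-aware
    caption: str


def earliest(sightings: list[Sighting]) -> Optional[Sighting]:
    """Return the oldest known upload, or None if nothing was found."""
    return min(sightings, key=lambda s: s.uploaded_at, default=None)


def _normalize(text: str) -> str:
    """Ignore case and whitespace differences when comparing captions."""
    return " ".join(text.lower().split())


def caption_changed(viral: Sighting, sightings: list[Sighting]) -> bool:
    """True if the earliest upload's caption differs from the viral repost's caption."""
    first = earliest(sightings)
    if first is None:
        return False
    return _normalize(first.caption) != _normalize(viral.caption)
```

A changed caption does not prove the viral claim is false, but it is exactly the "real footage, new story" pattern that Step 4 should examine next.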

Step 4: Check Context Signals

Ask the boring questions.

When did this happen?

Where did this happen?

Who is in the video and how do we know?

Is there a longer version?

Is there secondary footage from another angle?

Most viral hoaxes collapse here.

Step 5: Cross-check with Credible Sources

If it is a major event, reliable outlets and official accounts usually mention it. If no credible source mentions it at all, treat the clip as unverified.

Step 6: Use Tools as Extra Signals, Not as Proof

Now is the moment to use an AI tool. Run the clip through Detect AI Video and see whether it flags suspicious patterns. Use the result as one input, not the final verdict.

A tool can help you prioritize what to investigate, but it cannot replace context checks.

When an AI Detector Helps (And When It Does Not)

AI detectors can be useful when the clip has visual artifacts, AI-style motion, or synthetic texture. They may also help when a video has been generated end-to-end.

They struggle in these situations:

Heavy compression, as with videos re-uploaded to social platforms.

Very short clips with limited visual detail.

Screen recordings, where the video is already “a copy of a copy.”

Clips that are mostly real but have small edits.

So the best approach is to use a detector to decide what deserves a closer look, then confirm with the verification steps.
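The split between "detector for triage" and "context for the verdict" can be made explicit. The sketch below is purely illustrative: it assumes a hypothetical detector that returns a 0 to 1 "likely synthetic" score, and the halving per hard case is an arbitrary choice, not a calibrated value. The hard cases are the ones listed above that can be spotted before analysis (small edits inside mostly real footage usually cannot).

```python
from dataclasses import dataclass


@dataclass
class ClipFacts:
    # Outcomes of the context checks (Steps 3 to 5).
    earliest_source_found: bool
    context_consistent: bool       # when/where/who answers agree with the claim
    credible_confirmation: bool    # a reliable outlet or official account confirms
    # Situations where detectors tend to struggle.
    heavily_compressed: bool = False
    very_short: bool = False
    screen_recording: bool = False


def detector_weight(facts: ClipFacts) -> float:
    """Arbitrary illustrative discount: each hard case halves trust in the score."""
    weight = 1.0
    for hard_case in (facts.heavily_compressed, facts.very_short, facts.screen_recording):
        if hard_case:
            weight *= 0.5
    return weight


def triage_priority(detector_score: float, facts: ClipFacts) -> float:
    """Use the detector score only to decide which clips to investigate first."""
    return detector_score * detector_weight(facts)


def verdict(facts: ClipFacts) -> str:
    """The detector score never appears here: only context checks settle a clip."""
    if facts.earliest_source_found and facts.context_consistent and facts.credible_confirmation:
        return "verified"
    return "unverified"
```

Keeping the score out of `verdict` is the point of the design: a tool result can raise or lower urgency, but it cannot by itself make a clip verified.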

Provenance and Trust Signals That Beat Guessing

Verification becomes much easier when a clip carries a reliable history.

Platform Labels and Disclosure

Some platforms label AI content, but labels are inconsistent. They can be missing, wrong, or easy to bypass. Treat labels as helpful, not definitive.

Content Credentials and Provenance

This is where content credentials matter. The idea is simple. A creator’s tools can attach a record of what happened to the file, like edits, exports, and identity information. When available, it is one of the strongest signals you can check.

C2PA Metadata

C2PA metadata follows the C2PA (Coalition for Content Provenance and Authenticity) standard for cryptographically signing and recording the origin and edit history of media. If a video carries valid C2PA data and the signer matches a trusted source, that is powerful evidence.

Important note: provenance can be missing for innocent reasons, especially for older clips or exported files. Missing metadata is not proof of a fake. It just means you need other checks.

Verification Playbooks for Real-World Scenarios

Viral News Clips

If a clip claims a breaking event, prioritize time and location checks. Search for the earliest upload and compare captions. Use news verification logic: identify the claim, find the original, and confirm context before sharing.

TikTok Trends and Remix Culture

Short-form platforms are full of edits, effects, and filters. That makes them fertile ground for synthetic media. If a clip is “too perfect,” or claims something shocking, treat it like a verification task, not entertainment.

This is why TikTok deepfakes deserve their own attention. Many fake clips look believable because viewers expect heavy editing on that platform.

Influencer Ads and Promotions

If you see a celebrity “endorsement,” assume it might be synthetic until you confirm it. Check the brand’s official channels. Look for the same promo on verified accounts. If the ad is only on a random page, be careful.

This playbook overlaps heavily with scam videos, where fake endorsements are one of the most common tactics.

Forwarded Videos in Private Messages

Private messages create urgency. Scammers rely on it. If a video comes with “share this now” language, slow down. Ask for the original source. If nobody can identify where it came from, do not trust it.

This is the pattern behind many WhatsApp scams that spread quickly inside families and groups.

How to Build Synthetic Media Literacy for Your Team

If you manage a site, a community, or a business, treat verification like a process.

Create a simple rule: no reposting unverified clips.

Create an escalation step: if a clip could harm someone, it must be verified by two independent checks.

Create a documentation habit: save URLs, screenshots, and your reasoning.

This makes your decisions repeatable. It also protects you if someone asks later why you shared or rejected a clip.
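For teams that track reviews in a script or spreadsheet export, the three rules above can be encoded as a single gate. This is a hypothetical sketch; `ReviewRecord` and its fields are illustrative names. Counting distinct check names is only an approximation of "independent": a reviewer still has to judge whether two checks really rely on different evidence.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewRecord:
    clip_url: str
    could_harm_someone: bool
    # Names of checks that passed, e.g. "earliest source", "official confirmation".
    passed_checks: set[str] = field(default_factory=set)
    reasoning: str = ""   # the documentation habit: why you decided


def may_repost(record: ReviewRecord) -> bool:
    """No reposting unverified clips; potentially harmful clips need two checks;
    every decision must include written reasoning."""
    required = 2 if record.could_harm_someone else 1
    return len(record.passed_checks) >= required and bool(record.reasoning.strip())
```

Requiring non-empty `reasoning` turns the documentation habit into a precondition instead of an afterthought.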

Mistakes That Make People Fail Verification

Trusting one signal. A single tool result or a single “expert” thread is not enough.

Assuming “HD means real.” AI can generate sharp video.

Ignoring context. Many hoaxes use real footage with a fake caption.

Sharing “just in case.” That is how misinformation spreads.

Overconfidence. The best verifiers stay cautious.

A Clear Way to Stay Safe Without Paranoia

Synthetic media will keep improving. The goal is not to catch every fake by eye. The goal is to build habits that reduce your risk and prevent you from spreading misinformation.

If you do one thing, do this: always find the earliest source and confirm context. If a clip matters, use tools like Detect AI Video as an extra layer, then rely on cross-checks to confirm the story.

FAQ: Synthetic Media and Verification

What is synthetic media in simple terms?

Synthetic media is video or audio that is created or altered using AI so it can change what people think is real.

Are deepfakes the same as synthetic media?

Deepfakes are one type of synthetic media, usually focused on identity manipulation. Synthetic media also includes fully generated scenes and AI-edited footage.

How can I verify a suspicious video quickly?

Define the claim, find the earliest upload, check context, cross-check with credible sources, and then use a detection tool as an extra signal.

Can AI detectors prove a video is fake?

No. They provide signals and probabilities. You still need context checks and independent confirmation.

What is the most reliable proof a video is real?

There is rarely a single proof. Strong evidence includes a trusted original source, consistent context, secondary footage, and reliable provenance records.

What should I do if I already shared a suspicious clip?

Update your post, add a correction, and share the verified source. If it was harmful, remove it and explain why.